This week the hugely popular social networking platform Instagram announced the launch of new features designed to tackle messages that might be considered bullying or harassment.

According to an article on the company’s blog, ‘We can do more to prevent bullying from happening on Instagram, and we can do more to empower the targets of bullying to stand up for themselves’.

Adam Mosseri, Head of Instagram, says: ‘The intervention gives people a chance to reflect and undo their comment and prevents the recipient from receiving the harmful comment notification’.

This sounds great. Instagram are right: they badly need to focus on abusive posts. A tool that encourages reflection and offers the chance to undo is very welcome. We’ve all texted or posted something on impulse and regretted it later. The statement is, however, misleading in that the feature will not always ‘prevent the recipient from receiving the harmful comment notification’. That said, Instagram did admit that in testing it worked with ‘some people’. We’ll take that. Some is better than none, and it’s time reflection tools were introduced.

We’re big fans of social media, and of social media companies taking more responsibility for what happens on their platforms, so we were pleased to see Instagram making efforts in this area.

While bullying is an age-old problem offline, online it happens predominantly via social networks and gaming, so it’s vital that these companies make efforts here. Let’s face it: there are very few social media platforms where some form of bullying, harassment or abusive behaviour doesn’t take place.

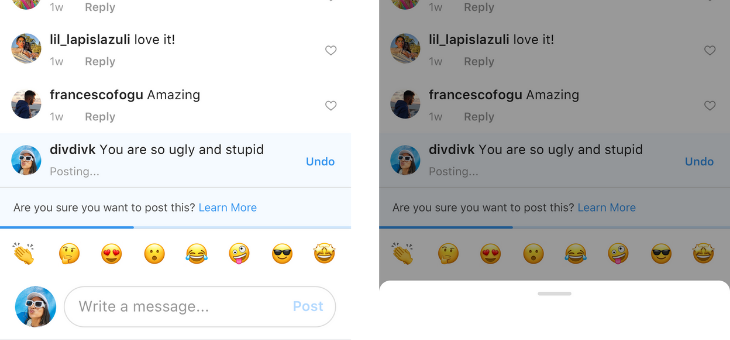

So let’s take a look at how the new features work.

The first feature being rolled out claims to be ‘AI that notifies people when their comment may be considered offensive before it’s posted’ and supposedly detects ‘bullying and other types of harmful content in comments, photos and videos’.

So how effective is this?

Given the many variations of words people use, and the fact that messages can also be contained in images and videos, harmful content can be difficult to detect.

Having previously worked on a project involving algorithmic content identification on a huge scale, I know from experience that this is difficult to get right much of the time.

Secondly, does it actually stop bullying and other abuse?

Does it stop it, make the poster think or just give a subtle prompt?

The second feature, called ‘Restrict’, is neither innovative nor new. In fact, this kind of feature (shadow banning) has been widely available for years, though again it’s good to see it being used on a platform as prolific as Insta.

The idea is that the person targeted with abusive posts can prevent them from being seen publicly. Their messages are moved elsewhere. The key to this feature is that the poster of abusive comments still thinks they are posting publicly. When they get no engagement, the hope is that they will get bored and stop.

In a nutshell, the ‘Restrict’ feature works on the same principle. According to Instagram: ‘Once you Restrict someone, comments on your posts from that person will only be visible to that person. You can choose to make a restricted person’s comments visible to others by approving their comments’.

Anyone working in mental health or psychology will tell you that developing technology to address these kinds of harassing or bullying behaviours will take time. We won’t see the impact for a while, and even then only through a complex combination of human processes and machine learning. The examples Instagram uses in its posts rely on very basic language, e.g. ‘You are so ugly’. In real online life, abusive language is far more nuanced and therefore harder for AI to detect. Nonetheless, these features are steps in the right direction.

>> Read the full article at waynedenner.com

Wayne Denner is a speaker, author and expert on Online Reputation and Wellbeing. Wayne helps young people protect and improve their digital presence. Visit waynedenner.com for more information.